A Smart Contract

Development Stack, Part II: Game Theoretical Aspects

Game theory is “the study of

mathematical models of conflict and cooperation between intelligent rational

decision-makers” [Wikipedia]. In particular, it looks at how rules in

cooperative processes (“games”) impact the outcome, and also how the parties

taking part in the game can cheat, i.e., exploit the rules for their own

benefit.

In my previous post I sketched some ideas of how smart

contracts can be expressed declaratively to avoid low-level mistakes, and also

emphasised that techniques from functional safety, such as model

checking, can be used to ensure properties of (state-based) contracts.

However, as Björn Engelmann pointed out to me, many (smart) contracts are, by

definition, cooperative processes, which is why they are susceptible to

“game-theoretical” exploits. In this post I will mention a few, and show some

ideas how they can be remedied.

A First Example: Sybil

Attacks

A sybil attack is

one where a reputation-based system is subverted by one (real-world) party

creating loads of fake (logical) identities who then behave in accordance with

the real world party’s goals. For example, consider a decision that is based on

majority vote. An attacker could create lots of additional parties and thereby

taking over the majority, leading to a decision in the interest of the

attacker. This attack only works, of course, if it is at all possible to

include additional parties in the set of decision makers, but this kind of

dynamic group is certainly not rare. So what can be done to prevent it?

Vote in

One thing that can be done is

to require that new parties cannot just join, but have to be voted in by the

existing members. It would then be there task to ensure, through means outside

the contract, that a join request is valid in the sense that is does not

original from a malicious real-world entity. The last example in my previous post showed this process. However, ensuring

this validity can be a lot of effort, risking that it isn’t done consistently.

It can also be exploited by the existing decision makers to keep unwanted

additional parties out, reducing the openness of the overall process (if that

openness is required in the first place).

Rate Limitations

Another approach to reducing

the risk from Sybil attacks is to limit the rate at which new parties can

request to join the process. To implement this declaratively, I have

implemented a facility by which the rate of commands that come into an

interactor (read: the events that come into a state machine) can be limited.

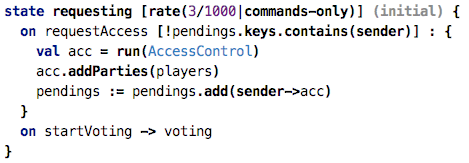

The following code expresses that while the machine is in state requesting,

only three commands per second are allowed (obviously a value that is useful

only for testing). If more requests come in, they are rejected.

The Interceptor Framework

The rate keyword

shown above is an example of an interceptor. Interceptors are associated with a

state (or with a parent state in a hierarchical state machine). Every trigger

or variable access that enters the machine is first passed to all applicable

interceptors (they are collected by walking up the state hierarchy). The

interceptor can observe the command or variable access and decide what to do

about it:

· It can let the request through unchanged,

· It can make arbitrary changes to the

request,

· Or it can block the request completely.

In the example above, the request

is completely blocked if the frequency of requests exceeds the one specified in

the rate interceptor. Note that interceptors are

different from guard conditions because they apply, hierarchically, to all

transitions in a state, and they can also maintain their own state . The rate

interceptor maintains a counter of the number of requests in the given timespan

to decide about rejection.

Interceptors are a bit

similar to Solidity’s Function Modifiers, although interceptors are

associated with states and not single functions.

I have implemented several other

interceptors that can all be used to help with the game theoretical nature of

smart contracts. More examples follow below, after we have introduced context

arguments.

Context Arguments

A command entering an

interactor (e.g., a triggering event entering a statemachine) can have

arguments. For example, when triggering a buyevent, that event may carry

the price the client may want to pay for whatever they

buy. Guard conditions can take the arguments into account.

In addition, I have extended

interactors to support context arguments. They are essentially

a second list of arguments that are different from the regular arguments in the

following way:

· They are optional in the sense that an

interceptor decides whether they are required or not for a given command

· For the client, special syntax exists to

supply values for the arguments without explicitly mentioning it for each

command.

For (Ethereum-style) smart

contracts, an obvious context argument is the sender of a message;

this allows the contract to make decisions about the validity of a command and

also to ensure that the sender’s account pays the transaction fees.

We are now ready to look at

the next interceptor.

The SenderIs interceptor

It is rather obvious that,

for contracts to be valid, one often has to check that commands come from a

limited set of parties. To continue with the example above, only the set of

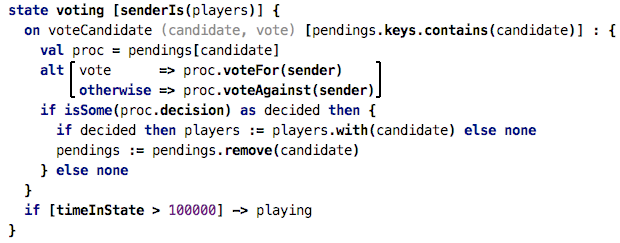

already-voted-in decision makers can take part in a vote. The following code

enforces this by using the senderIs interceptor:

This interceptor takes as an

argument a collection of parties, and for every command or variable read that

comes in, it checks that the sender is in the list of

parties (players in the example above). If it isn’t, the

request fails. It also fails if no sender context argument is

supplied by the client at all.

Note how within a state that

(transitively) has a senderIs interceptor, a

variable sender is available that

refers to whoever sent this command; interceptors that enforce (by otherwise

failing) that a given context argument is specified can also make available

special variables with which that context argument can be accessed to make

further decisions in the implementation. In the example above, the sender variable

is passed into a multi-party boolean decision (as explained in the previous post) that handles the actual decision of whether

the requesting sender should be allowed in.

Obviously, one could perform

the validation of the sender manually in every guard condition of every

transition; but as you can tell from the words “manually” and “every”, this is

tedious and error prone, and should thus be avoided.

Turn-by-Turn Games

Many “games” require a fair

allocation of opportunities to participating parties. One way of achieving this

is to run a game turn-by-turn, where each party can make one “move” in every

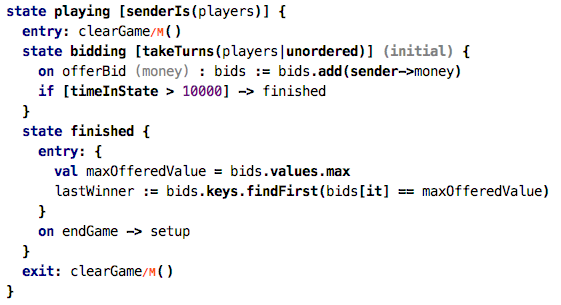

“round”. In my example, I have a bidding process:

Note how the bidding state

uses a takeTurns interceptor. Again, it takes as an argument a

list of parties which have to take turns. You can configure how strict the

turn-by-turn policy should be enforced. Unordered means that in each

round, everybody has to make a move, but the order is not relevant. Ordered means

that the order given my the list of parties passed to the interceptor is

strictly enforced. A violation leads to a failure of the command. The

interceptor also provides access to the list of allowed next movers; this could

potentially be used to notify parties that it’s their turn.

Now, there is a risk of a

denial of service attack: assume ordered mode, and the next

party P just doesn’t make its move: the whole process is stuck. Nobody

else can make a move because it’s P’s turn. But P just

doesn’t do anything. This is why a turn-by-turn game should always include a

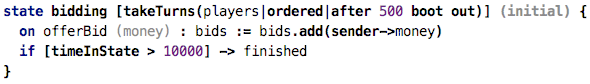

timeout:

In the refined example above,

we specify a timeout of 500. If the next party does not make their move within

500 time units after the previous one, that party is removed from the game. It

then becomes the another party’s turn. It’s also possible to just skip the

“sleeping” party and give the turn to the next one in line. Further policies

can be imagined, such as skip three times and then remove, or whatever.

Summary

When I chatted with Björn

about safety of smart contracts and mentioned model checking, he said that he

doesn’t know how to do model checking for game-theoretical properties.

Obviously I don’t know either. But instead of checking, another way to reduce

the risks is to support correctness-by-construction, i.e., make it very simple

to define contracts that do not have a particular class of risks, for example

by providing declarative means for things like authorisation, rate limiting or

turn-by-turn games. This is what I have shown in this post.

It should also become more

and more obvious why higher-level abstractions (compared to a “normal”

programming language) are needed to efficiently express safe smart contracts.

Trying to implement all of these things “with normal code” or just with

libraries will lead to many low-level mistakes. DSLs are really very useful

here.